Supporting content for SAP ECC and SAP S/4HANA data connections

The following sections can be used when configuring the connection between SAP ECC or SAP S/4HANA and the Celonis Platform:

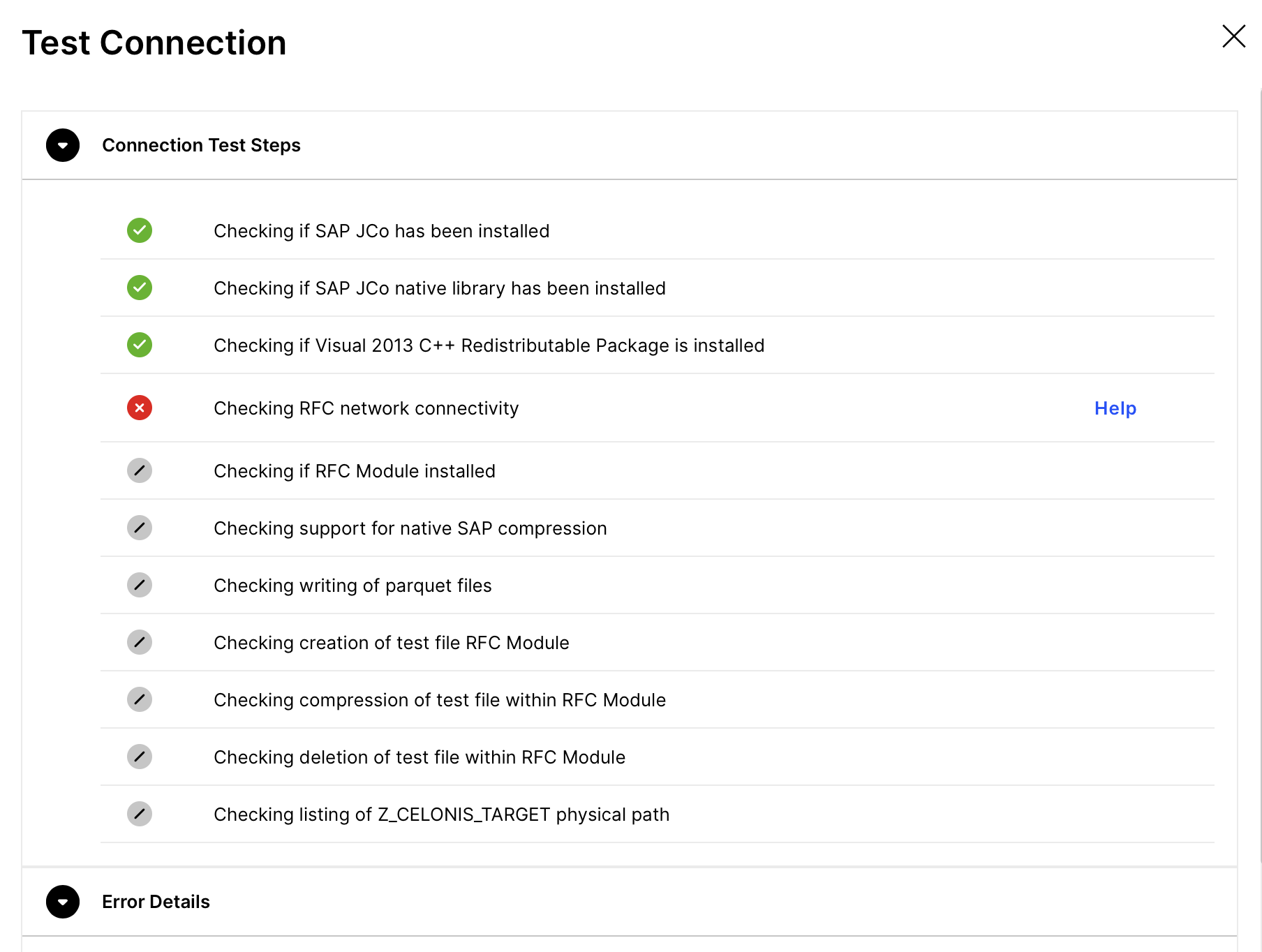

Connection Test

When you test a connection, the following steps are performed:

Check whether all the function modules exist

Write an empty file on the SAP server in the defined folder

Compress the newly created file (if compression is enabled)

Send the file to the extractor

Therefore the following aspects are tested:

Existence and completeness of the RFC module

Writing rights on the SAP server in the respective folder

Reading rights on the SAP server in the respective folder

Correctly configured compression method

Open firewall ports to send RFC call to the SAP system

Open firewall ports to send files from the extractor to the cloud endpoint

Below you can find the errors that may cause the connection test to fail and the respective solution(s), grouped by the connection checklist task.

When a checklist task fails in the SAP connection test, you can click the Help button to navigate to the relevant solutions on this page.


SAP JCo not found on classpath, ensure 'sapjco3.jar' exists

Solution

The JCo library Java part (sapjco3.jar) needs to be placed within the 'jco' folder of the extractor. You can download the respective package from the SAP Marketplace.

Note: This step should be performed by someone who has an SAP Service User (S User), which authorizes them to download software from SAP portals. Usually the SAP Basis team has such access.

A restart of the Extractor is required after the installation.

SAP JCo native library not found

Solution

The JCo native library file sapjco3 (.dll / .so / .sl / .dylib) needs to be placed within the 'jco' folder of the extractor. You can download the respective package from the SAP Marketplace.

Note

Only an SAP Service User (S User) can download software from SAP portals. Usually, a customer's SAP BASIS has this access.

The downloaded jco folder already contains two files: a Java part (sapjco3.jar) and an operating system-specific part, for example sapjco3 with the extension .dll, .so, or .sl.

Copy the Library files into the external folder located at:

{installation_folder}/shared/libraries/external.

The Celonis Agent is configured to read the library from this directory.
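As a quick sanity check after copying the files, the following minimal sketch lists which JCo parts are missing from a folder. The function name and the loose file-name matching are assumptions made for illustration; the exact native library name can differ by operating system, so the check only looks for "sapjco3" in the name plus one of the extensions mentioned above.

```python
from pathlib import Path

# Native library suffixes named in this article. The exact file name
# (for example a "lib" prefix on some systems) is an assumption, so we match loosely.
NATIVE_SUFFIXES = (".dll", ".so", ".sl", ".dylib")


def missing_jco_parts(folder):
    """Return a list describing which JCo parts are absent from `folder`."""
    names = [p.name for p in Path(folder).iterdir() if p.is_file()]
    missing = []
    # The Java part has a fixed name.
    if "sapjco3.jar" not in names:
        missing.append("sapjco3.jar")
    # The native part is matched by name fragment and extension.
    has_native = any(
        "sapjco3" in name and name.endswith(NATIVE_SUFFIXES) for name in names
    )
    if not has_native:
        missing.append("native library")
    return missing
```

Calling `missing_jco_parts` with the path of the external libraries folder returns an empty list when both parts are present.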

Note

After setting up the jco, the Windows Service Celonis Agent needs to be restarted for the jco related changes to take effect.

Unsupported operating system for SAP JCo native library

Solution

The JCo native library file sapjco3 (.dll / .so / .sl) is not supported by your operating system. Make sure to place the correct library file for your respective operating system.

A restart of the Extractor is required after the installation.

Solution

The Microsoft Visual C++ 2013 Redistributable Package needs to be installed on the server.

A restart of the Extractor is required after the installation.

Solution

Check if the Host and the System Number are specified correctly in the Data Connection.

If you are using Message Server as middleware, check if the Message Server Host and the Message Server Port are specified correctly.

Check if the following network connectivity requirements are satisfied:

| Source System | Target System | Port | Protocol | Description |
|---|---|---|---|---|
| On premise extractor server | SAP ECC system | 33XX (where XX is the system number) | TCP | RFC connection from on premise extractor server to the SAP system. The system number can be retrieved from the SAP Basis team. |
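The port rule in the table can be checked from the extractor server with a short script. This is a minimal sketch using only the Python standard library; the function names are hypothetical, and a successful TCP connect only shows that the port is reachable, not that the RFC logon will succeed.

```python
import socket


def rfc_port(system_number):
    """Return the 33XX port for a two-digit SAP system number such as "00"."""
    number = int(system_number)
    if not 0 <= number <= 99:
        raise ValueError("system number must be between 00 and 99")
    return 3300 + number


def port_is_open(host, port, timeout=5.0):
    """Try a plain TCP connect; True means something accepted the connection."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

For example, `port_is_open("sap-host.example.com", rfc_port("00"))` tests port 3300 on a placeholder host name; replace it with the host configured in the Data Connection.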

Solution

Check the specific error message that is provided along with this failure.

If this does not help, open a support ticket.

Solution

The Celonis RFC user is missing required authorizations. Make sure you have downloaded the dedicated user role from the Download Portal.

Afterwards follow these steps to apply the correct role to the technical user of the RFC connection:

Run the transaction PFCG

Go to Role → Upload

Select the provided role file (CELONIS_EXTRACTION.SAP)

Apply the uploaded role CELONIS_EXTRACTION to the RFC user

If you wish to set up the user yourself, make sure that the user has the following permissions:

Permission to write on the server hard disk under the path specified in step B

Permission to execute all RFC modules contained in the transport imported in step A (package name /CELONIS/DATA_EXTRACTION )

Read access to all tables that should be extracted and DD02L and DD03L for retrieving the table and column names

Solution

You have not installed the Celonis RFC Module or you have an outdated version in place.

For all the installation files, go to the Download Portal.

Solution

The minimum requirement is RFC Module version 1.6

For all the installation files, go to the Download Portal.

Solution

The called RFC function resulted in an internal error.

You can check the specific error message. If that doesn't help to fix the error, open a support ticket.

Your RFC module version does not support usage of native SAP compression. Please update your RFC module to use this feature

Solution

The minimum requirement is RFC Module version 1.6. Extractor version 2019-11-12 is also required.

For all the installation files, go to the Download Portal.

In case an upgrade is not possible, you can switch to gzip compression.

Checking writing of parquet files

Solution

The Extractor Service is lacking permissions to write to the 'temp' folder in the extractor directory.

If the permissions are fine, open a support ticket.

Solution 1: (Z_CELONIS_TARGET is set to its default value):

Create a dedicated directory on your SAP server (preferably a network drive) and make sure that the user running the SAP system has reading and writing access to it

Warning

In case you are using Logon Groups, or there are multiple application servers where the extraction jobs can run, make sure that the directory is on a network drive, and is accessible from all the servers.

Run the transaction FILE in your SAP system

Find the Logical Path Z_CELONIS_TARGET in the list

Edit the entry by clicking on the button to the left of the new entry and then double-click on the folder "assignment logical and physical paths"

Set a path for UNIX and/or Windows (shown in the screenshot on the left); the path should be the directory on the system or a network drive that you created in step 1

Solution 2:

The logical path Z_CELONIS_TARGET is not accessible from the application directory.

If you are using load balancing, please make sure to use a network drive instead of a local directory.

Solution

The Celonis RFC user is missing required authorizations.

Follow these steps to apply the correct role to the technical user of the RFC connection:

Run the transaction PFCG

Go to Role → Upload

Select the provided role file (CELONIS_EXTRACTION.SAP)

Apply the uploaded role CELONIS_EXTRACTION to the RFC user

If you wish to set up the user yourself, please make sure that the user has the permissions below.

Permission to write on the server hard disk under the path specified in step B

Permission to execute all RFC modules contained in the transport imported in step A (package name /CELONIS/DATA_EXTRACTION )

Read access to all tables that should be extracted and DD02L and DD03L for retrieving the table and column names

Test file permissions failure

Solution

Please make sure that the operating system as well as the SAP system have reading and writing permissions for the logical path Z_CELONIS_TARGET.

Test file compression failed

Solution

Make sure the command (for your compression method) is configured in SM69. Check if the command is valid and that an executable exists.

Compressed test file not found

Solution

Check if the command (for your compression method) is configured correctly in SAP transaction SM69.

Test file deletion failed

Solution

Make sure to download the Celonis User Role from the Download Portal and add it to the RFC user.

Z_CELONIS_TARGET is set to its default value. Please check there is enough space in this location, and if you have multiple servers configure it to a network share

Solution

Create a dedicated directory on your SAP server (preferably a network drive) and make sure that the user running the SAP system has reading and writing access to it

Warning

In case you are using Logon Groups, or there are multiple application servers where the extraction jobs can run, make sure that the directory is on a network drive, and is accessible from all the servers.

Run the transaction FILE in your SAP system

Find the Logical Path Z_CELONIS_TARGET in the list

Edit the entry by clicking on the button to the left of the new entry and then double-click on the folder "assignment logical and physical paths"

Set a path for UNIX and/or Windows (shown in the screenshot on the left); the path should be the directory on the system or a network drive that you created in step 1

Solution

The application server cannot access Z_CELONIS_TARGET.

Make sure to download the Celonis User Role from the Download Portal and add it to the RFC user.

On average, you should expect the following extraction throughputs:

Large tables, such as BSEG: 100k records/minute.

Mid-size tables, such as EKKO or VBAK: 250k records/minute.
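To turn these averages into a rough planning figure, divide the expected record count by the throughput. The sketch below is only back-of-the-envelope arithmetic; the function name and the 50 million record count are illustrative assumptions.

```python
def estimated_minutes(record_count, records_per_minute):
    """Rough duration estimate: records divided by throughput."""
    if records_per_minute <= 0:
        raise ValueError("records_per_minute must be positive")
    return record_count / records_per_minute


# 50 million records of a large table at 100k records/minute
print(estimated_minutes(50_000_000, 100_000))  # 500.0
```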

However, these numbers are just an approximation and the actual duration can vary significantly depending on the source system. To understand the variance, it is important to understand the overall flow and services involved:

The extraction is based on an SQL query, which is executed at the database level.

The output is stored in the SAP memory.

From memory, the data is written to .csv files.

These .csv files are then fetched by the On-Premise-Client to be uploaded to the cloud.

A problem in any of these steps will impact the performance of the overall runtime. The following are the most common factors that can impact performance:

Database and SAP server capabilities

The more resources the database and the application server have, the faster the extraction query will run.

Source system load

Running the extractions during the busy hours will lead to slower performance since there are more requests competing for the same resource pool.

Complexity of the applied filter and table size

This is a very important and often neglected factor. If you apply a filter to non-primary key columns, the runtime will be much slower than with a filter on the primary key (PK), which is indexed in the database. The table size also plays a big role, especially with non-PK filters. Extracting 1 million records out of 100 billion won’t have the same duration as extracting 1 million out of 100 million.

Size of the table

Depending on the business, the size of the same table can vary significantly. This will lead to different runtimes for the same table, even if the number of extracted records is the same.

Network bandwidth between the OPC and SAP Servers

OPC fetches the files from the SAP server, and if the network has a low bandwidth, this will impact the data transfer and consequently the overall extraction speed.

When upgrading from SAP ECC to S/4 HANA, the Celonis data pipeline may be affected, particularly in the Extraction and Transformation layers. Below, we explore various scenarios and their expected impacts.

Celonis RFC Extractor

The RFC module is an ABAP application that is compatible with both SAP ECC and S/4 HANA. It uses no version specific functionality and runs seamlessly in both systems without any migration. The only exception is when the Real-Time Extension is activated, in which case a migration is needed.

Real Time Extension is not active

Minimal migration effort is needed in this scenario. The RFC Module is used only for batch-based extractions and there are no Celonis change logs or triggers installed in SAP.

In this scenario follow these steps:

Confirm the successful transfer of the RFC Module in S/4 HANA. Search for the package /CELONIS/* (the full name varies depending on the extractor version).

Set up the target path Z_CELONIS_TARGET as explained in Installing RFC Module.

Check that the Celonis user exists in S/4 and has the appropriate permissions.

Real Time Extension is active

In this scenario, the change log tables and triggers installed in SAP ECC need to be recreated in S/4 HANA to accommodate table schema changes. In general, we advise that you perform the “Real Time Extension” setup from scratch by following the steps in "2. Set up and Configure the Real-Time Extractor".

Warning

In S/4 many tables have been replaced with compatibility views, e.g. MSEG. Since they no longer physically exist in the database, you won’t be able to install triggers on them. To extract these tables in real time you need to switch to the replacing table, e.g. MATDOC instead of MSEG.

Extraction and Transformation configuration in the Celonis Platform

S/4 HANA comes with compatibility views so that every deprecated SAP ECC table has a corresponding view in S/4. As a result, old integrations won’t break and can also be run against S/4. This also applies to the Celonis Platform, meaning that you can keep running the same Extraction=>Transformation against S/4 and expect the same dataset.

However, if the real-time extension is active and you have to replace tables that don’t exist anymore, then you have to modify the Extractions as well. In the MSEG example, you need to replace it with MATDOC. You can also rename it to MSEG during the extraction in order to keep the transformations intact downstream.

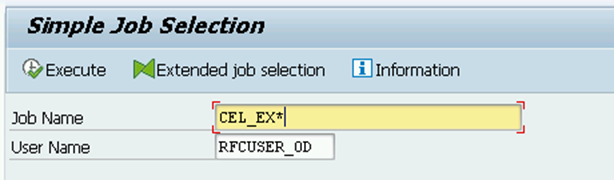

When starting an extraction in the Celonis Platform, every table being extracted maps to one background job in the SAP system.

The number of parallel extractions (and therefore background jobs) can be modified in the connection details of the data connection.

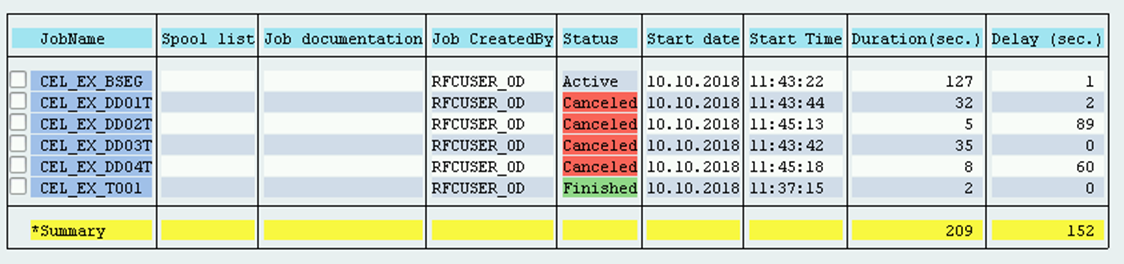

To see the jobs created by the Celonis Platform in SAP, run transaction SM37. To find the correct jobs, enter the prefix of the created job names (CEL_EX*) into the job name field and the name of the Celonis RFC user in the user field.

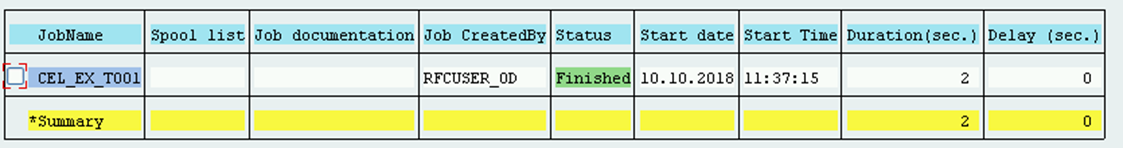

If one job on this day already ran, you may see a listing like on the left.

It shows the successful extraction of the table T001 from the Celonis Platform.

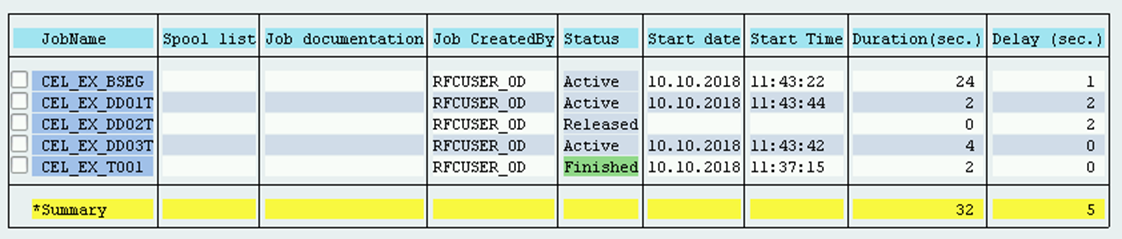

In this case the BSEG was running from one Data Job, the DD0* Tables from another job.

When cancelling one of the data jobs, the overview may look like the following.

The job extracting the DD0* Tables was cancelled from the Celonis Platform, the job extracting the BSEG is still running.

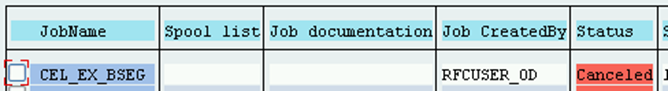

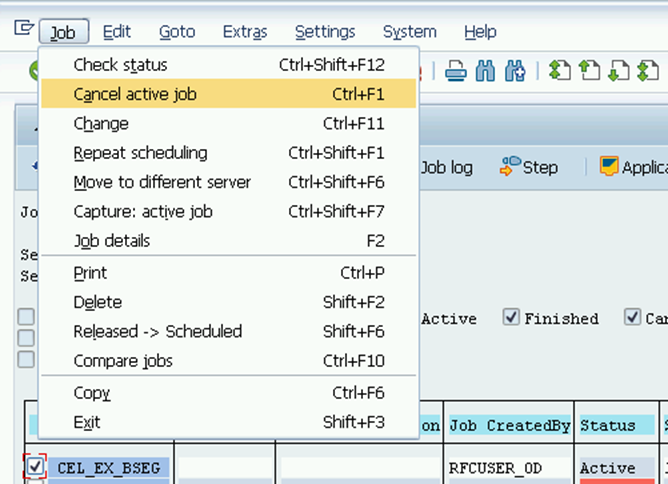

Cancelling a Job from the SAP System

If we want to cancel a job from the SAP system, we can do so from the transaction SM37.

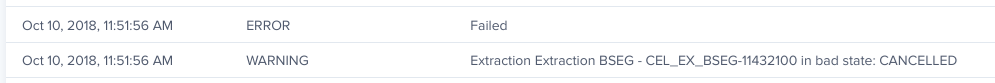

In the Celonis Platform, we can look at the job log and see something like the picture on the left. So the cancelling in SAP is picked up by the Celonis Platform and the Data Job is set to failed.

To remove the Celonis Extractor from SAP system perform the following steps:

(Optional) Stop all replications and/or the extraction data jobs from the Celonis cloud.

(Optional) If the Real-Time Extension is active:

Delete the Triggers via the transaction code /CELONIS/CLMAN_UI in DEV, QA and PROD;

Delete the Change Log tables in DEV and then move the transport forward to the QA and PRD systems;

Remove the CEL_CL_CLEAN_UP_SCHEDULED clean up job, if it was set up, in all systems.

Import the latest “Uninstall transport” for the RFC Module into SAP. To get the transport, contact Support through the Support Portal.

This transport removes all objects shipped in the RFC Module.

Important

We don't recommend using third-party tools for managing the import of SAP transports before moving them to the Celonis Platform.

Verify that the /CELONIS/ namespace folder is empty.

Delete the Celonis user and role from SAP.

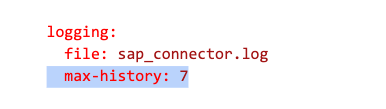

Detailed extraction logs are generated and stored locally on the Extractor server. By default, they are stored in the root folder where the file connector-sap.jar is located. A new log file is generated daily, so over time the number of log files can grow significantly. To prevent this, you can set a retention period in the file application-local.yml, and older logs will be cleaned up automatically.

In the file application-local.yml, add the following line in the "logging" section, as shown below. Make sure that the spacing is correct.

Restart the Extractor service

Note

The feature is available only in the On-premises clients (OPC). The uplink-based on-premise extractor does not support this.

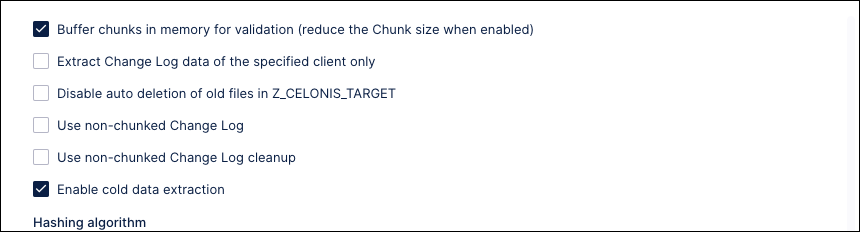

SAP S/4 HANA offers functionality to archive old data and free up working memory. The table is partitioned based on the age of the data. The aged data is moved to the persistent memory and is not available unless it is explicitly invoked.

The RFC module has been enhanced to allow the extracting of this aged data. Go to Data Connection > Advanced Settings and enable the "Enable cold data extraction" option to make the aged data available for extraction along with the current data.


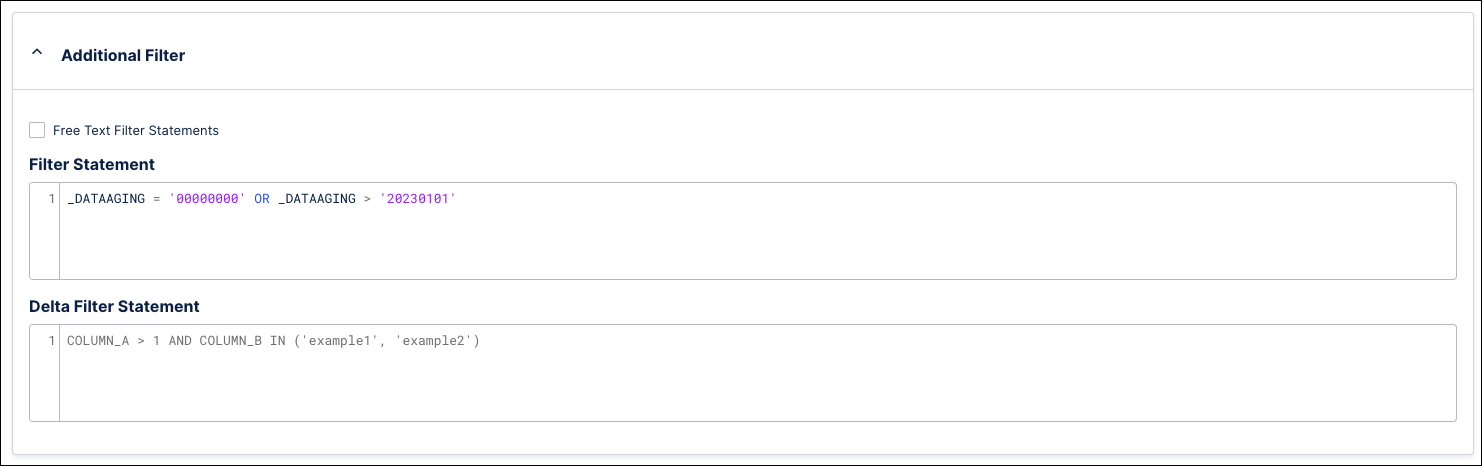

You can use the _DATAAGING column to filter the aged records based on their date. The filter for the current data is _DATAAGING = '00000000', so be sure to include this in the filter and avoid excluding it from the extraction. For example, the filter in the screenshot below will extract all the current data (_DATAAGING = '00000000') AND any aged data newer than 01-01-2023 (_DATAAGING = '20230101').
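The logic of such a filter can be illustrated with a small sketch. The function name is hypothetical, and the strict "newer than" comparison is an assumption, since the exact operator used in the screenshot is not reproduced here. Dates are YYYYMMDD strings, so plain string comparison orders them chronologically.

```python
CURRENT_DATA = "00000000"  # value of _DATAAGING for current records


def is_extracted(dataaging, cutoff="20230101"):
    """Return True for current data, or for aged data newer than `cutoff`.

    Whether the cutoff date itself is included is an assumption (excluded here).
    """
    return dataaging == CURRENT_DATA or dataaging > cutoff
```

Note that '00000000' sorts before any real date, so a date condition on its own would drop the current data. That is why the condition on '00000000' has to be listed explicitly.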


Note

The feature is available only in the On-premises clients (OPC). The uplink-based on-premise extractor does not support this.

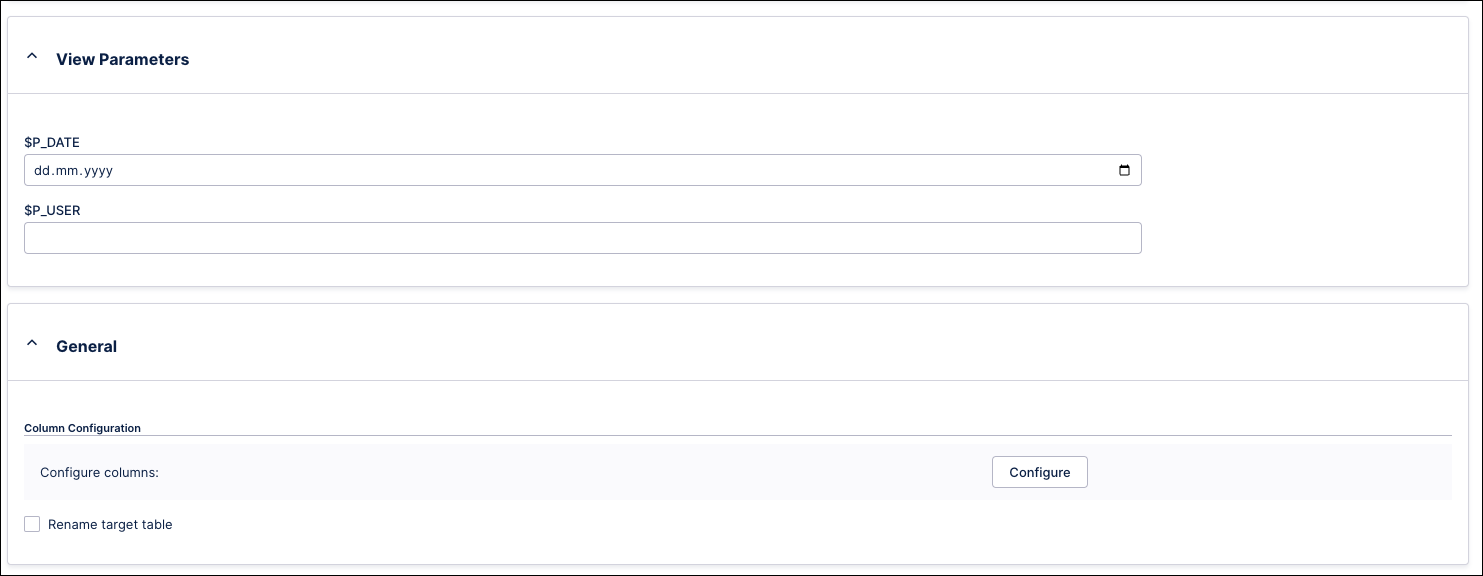

CDS views can require parameters that are passed during the execution and typically applied as filters. The Celonis SAP Extractor supports the extraction of parameterized CDS views. If the user selects this type of CDS view, the parameters will be rendered in a dedicated section of the Table Extraction configurations. Make sure to define values for all required parameters or else the extraction will fail in the SAP layer.

Tip

Only static values are supported, so dynamic parameters cannot be used as inputs.


With Celonis Platform, you can extract data from SAP tables. See the following section to learn more about how data is extracted and converted from different tables.

In the AR process, the credit check activity plays an important role. Typically the events are stored in CDPOS and can be extracted without any hassle. However, in S/4 HANA if the customer is using the advanced credit management module, then the outcomes of the credit check are stored in the BALDAT table. This complicates the extraction because BALDAT stores the data in an LRAW data type which is not extractable by our standard process.

Celonis has created an ABAP program which decrypts the column CLUSTD and stores the converted values in a new table which can be extracted as any other standard table from SAP.

Conversion logic and setup for the BALDAT table

The conversion is done using the program /CELONIS/RP_BAL_CONVERT. It calls the built-in SAP function BAL_DB_CONVERT_INTO_VER0000, which:

reads the records from table BALHDR

looks up their respective logs from the BALDAT

converts and writes the records in a new table /CELONIS/BALDAT.

The conversion process is resource-consuming, so we recommend creating a variant and scheduling a background job. The conversion supports two modes - full and delta. The full mode processes all the records without applying any timestamp filters. It is expected that the customers will run this for the initial conversion. Afterwards, they should schedule a job to run in delta mode. In this mode, the program processes only the records added after the last conversion run. The concatenation of columns BALHDR.ALHDATE + BALHDR.ALTIME is used to filter out the new records that have been added after the previous conversion run.
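The delta selection described above can be sketched as follows. This only illustrates the idea and is not the ABAP program itself; the function name, the dictionary layout, and the strict "later than" comparison are assumptions.

```python
def new_since_last_run(headers, last_run):
    """Select log headers whose ALDATE + ALTIME is later than `last_run`.

    `headers` is a list of dicts with "ALDATE" (YYYYMMDD) and "ALTIME" (HHMMSS).
    `last_run` is the same 14-character concatenation from the previous run.
    """
    return [h for h in headers if h["ALDATE"] + h["ALTIME"] > last_run]
```

Because both fields are fixed-width digit strings, concatenating them gives a value that sorts in chronological order, so a single comparison against the last run is enough.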

The transports can be downloaded from the Download Portal.

From version 4.5A, SAP no longer stores the MRP lists in the table MDTB. Instead, the data is compressed and stored in the cluster table MDTC, specifically in the CLUSTD field.

Unlike common tables, MDTC cannot be extracted via the standard SAP extractor. Celonis has developed a special program that converts MDTC into a standard table which can be extracted using the SAP extraction client.

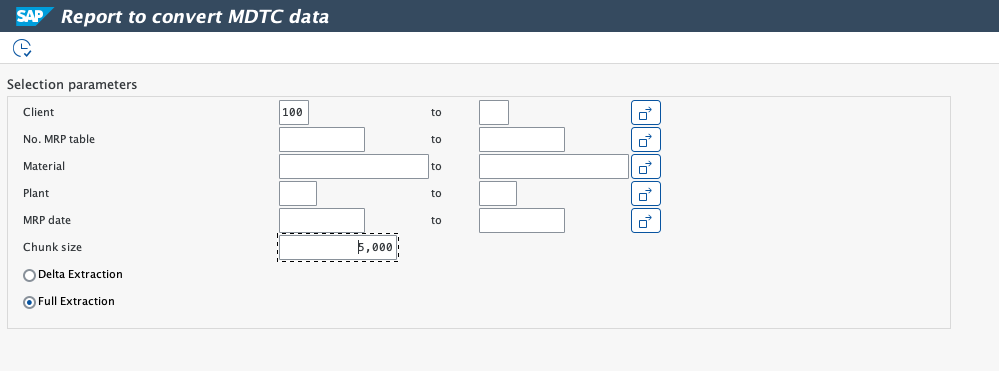

Conversion logic and setup for the MDTC extraction

The conversion is done via the program /CELONIS/RP_MDTC_CONVERT. It calls the built-in SAP function READ_MRP_LIST, which:

reads the records from table MDKP which has the Header Data for MRP Document

looks up their respective logs from the MDTC

converts and writes the records in a new table /CELONIS/MDTB.

The conversion process is resource-consuming, so we recommend creating a variant and scheduling a background job. The conversion supports two modes - full and delta. The full mode processes all the records without applying any timestamp filters. It is expected that the customers will run this for the initial conversion. Afterwards, they should schedule a job to run in delta mode. In this mode, the program processes only the records added after the last conversion run. The column MDKP.DSDAT is used to filter out the new records that have been added after the previous conversion run.

The transports can be downloaded from the Download Portal.

The SAP table STXL contains encoded data stored in the CLUSTD column. This data is not human-readable unless decrypted, and since our standard RFC module reads the text from the DB without any conversion, it is not suitable for this use case. The data is important for some business use cases, and we need a solution to fetch it into Celonis Platform as a readable text.

Rather than extending the RFC Module functionality, we have developed a separate program (the attachment is at the end of the page) that decrypts STXL into a readable format using the native SAP function READ_TEXT, and then writes this data into another table - /CELONIS/STXL.

This table can be extracted afterward using our standard RFC Extractor.

The converter program is distributed in the package ZSTX_CONVERT. It contains the following objects:

Table /CELONIS/STXL - stores the converted data

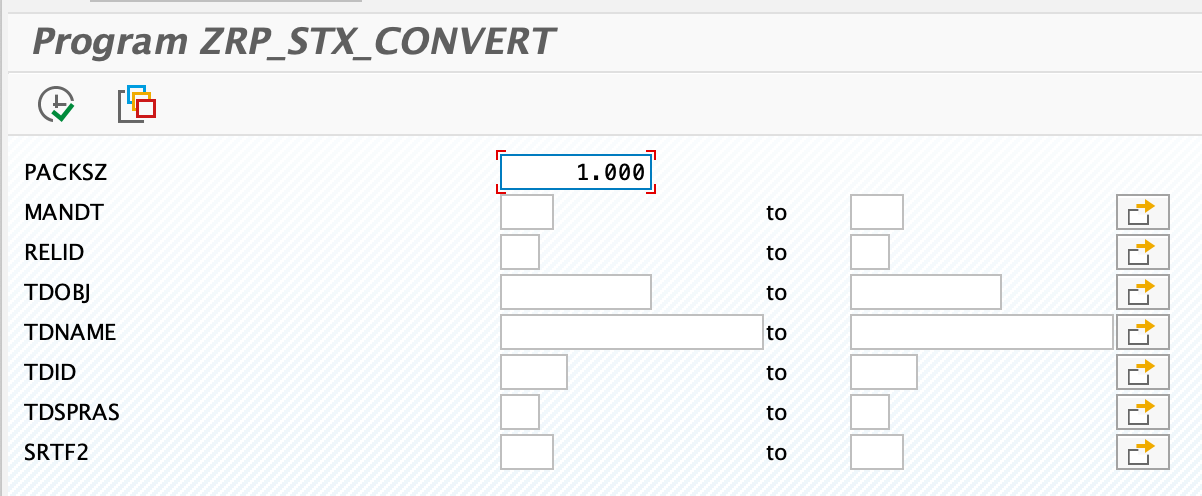

Program /CELONIS/RP_STX_CONVERT - performs the conversion.

The package is distributed as an SAP transport which should be imported into the SAP system. The user can create a variant of the report /CELONIS/RP_STX_CONVERT, and then execute it regularly via an SAP Background Job to convert the STXL. The running interval is up to the user, and is based on how frequently they want to refresh this data.

The report supports several parameters. The parameter PACKSZ defines the number of rows to process in memory, and the other parameters allow filtering the processed data.
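The effect of a package size such as PACKSZ can be pictured as processing the rows in fixed-size batches, so that only one batch is held in memory at a time. The sketch below is a generic illustration with a hypothetical function name, not the report's actual implementation.

```python
def in_packages(rows, packsz):
    """Yield consecutive slices of `rows`, each holding at most `packsz` items."""
    if packsz <= 0:
        raise ValueError("packsz must be positive")
    for start in range(0, len(rows), packsz):
        yield rows[start:start + packsz]
```

A smaller package size lowers peak memory use at the cost of more iterations; a larger one does the opposite.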


Download STXL conversion file

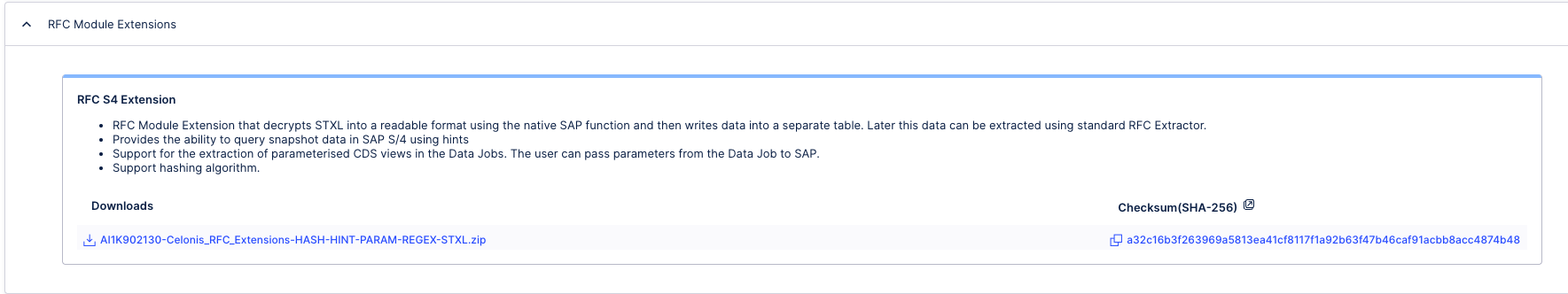

As an admin of a Celonis Platform team, you can download the STXL conversion zip file from the Download Portal.

Click Admin & Settings > Download Portal and then expand the RFC Module Extensions content:

To avoid system slowdowns and instability due to high memory usage during SAP data extraction, you can control the consumption on the application server level or the database level.

Managing memory consumption on the Application Server level

Active SAP processes, including Celonis extractor jobs, consume the application server memory. Here are some profile parameters responsible for managing memory in SAP that you might want to set:

abap/heap_area_dia: sets the heap memory limit for dialog work processes.

abap/heap_area_nondia: sets the heap memory limit for non-dialog work processes, such as background jobs.

abap/heap_area_total: defines the cumulative heap memory limit for all work processes.

abap/heaplimit: specifies the maximum private memory allocation for a single work process before it is restarted.

Note

Consult the SAP Basis team before making any changes to SAP memory settings.

Managing memory consumption on the database level

Database memory is consumed during the execution of queries by the Celonis Extractor. This can represent a significant load, particularly with large datasets. You can control the memory usage with the following parameter:

statement_memory_limit: this parameter restricts the memory allocated to a single SQL statement. This is critical for preventing individual queries from monopolizing database resources, especially in SAP HANA environments.

For more information, see SAP documentation.